Use Cases & Markets

Regulated industries face a unique paradox: AI can dramatically accelerate their work, but the cost of a mistake is measured in patient outcomes, financial penalties, and irreversible regulatory violations. LifeGraph is the knowledge and governance layer built for industries where trust matters most.

WHY THIS MATTERS NOW

Agentic AI raises the stakes for every industry, not just regulated ones.

When AI moves from answering questions to taking actions, the margin for error collapses to zero. Regulated industries feel the pressure most acutely, but any organization deploying agentic AI on proprietary, sensitive, or mission-critical data faces the same fundamental need: a trusted knowledge and governance layer that grounds AI in verified truth and keeps it accountable.

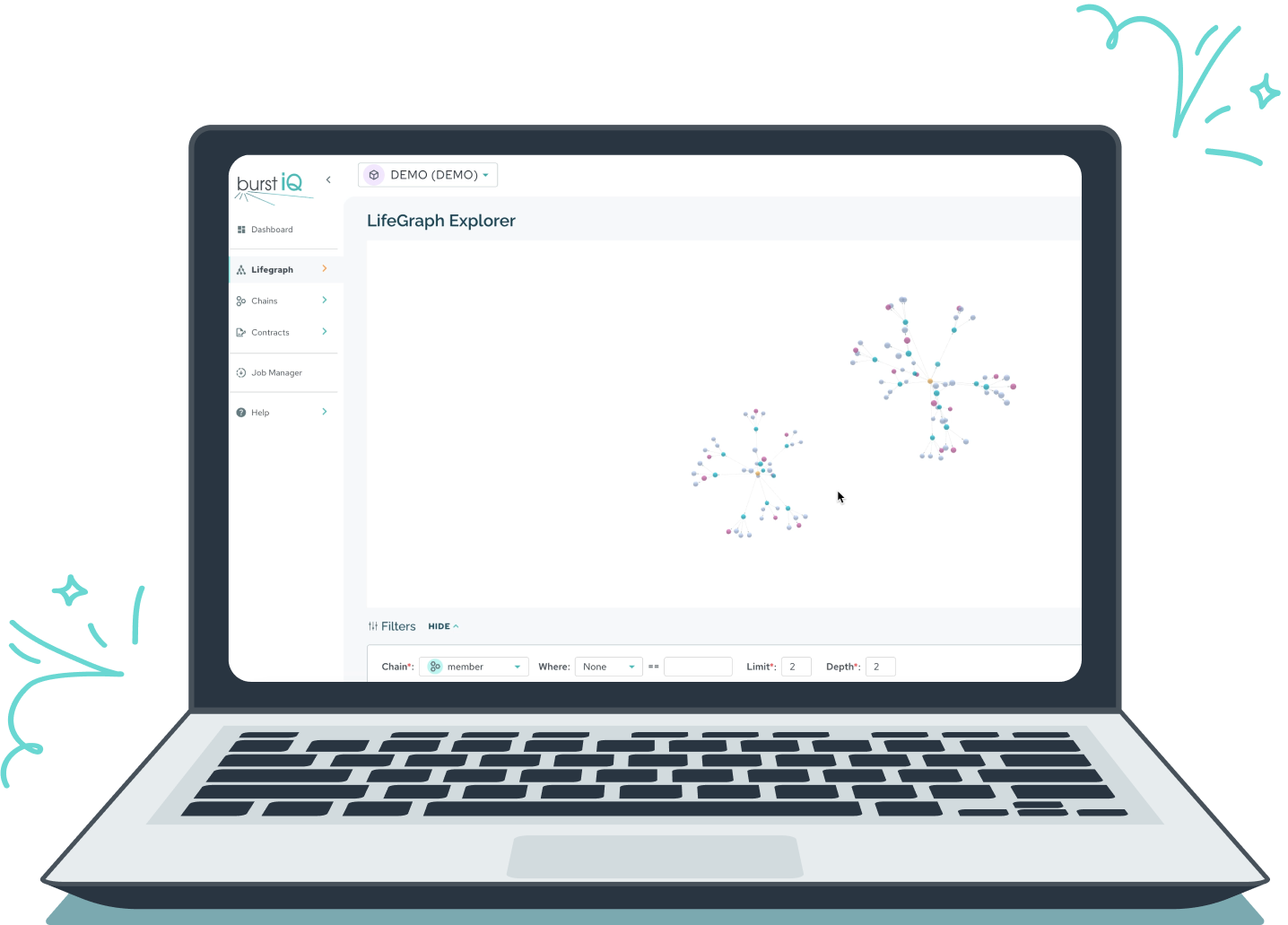

LifeGraph Was Built to Close These Gaps
